FEATURED

Building the Decision Stack

Establishing the data foundation enterprise decisions depend on.

→ Enabled scalable asset management and risk prioritization across 30K+ assets, improving program assignment and operational efficiency

Platform Architecture · Systems of Record · Enterprise Scale

Building Systems That Scale Decisions

The Three Layers of Durable Scale

Complex platforms don’t fail from missing features — they fail from missing structure. I design the systems, data models, and governance that turn enterprise complexity into clear, scalable decisions.

Each case study shows how I build that structure in practice:

Durable foundations

Governed intelligence

Disciplined ecosystem scale

Together, they show how I turn enterprise signals into decisive, scalable outcomes.

Designing Trustworthy AI

Engineering AI systems that increase velocity while preserving trust.

→ Reduced manual triage by 70% across 200+ organizations

AI Governance · Risk Architecture · Privacy Controls · Executive Impact

Scaling a Marketplace Through Governance Systems

Architecting marketplace systems where incentives and execution reinforce each other.

→ Exceeded multi-year NPS target (58 vs. goal of 50) and increased program participation by 27% YoY

Marketplace Strategy · Roadmap Governance · Operational Scale

CASE STUDY 1

FOUNDATION

Building the Decision Stack

Architecting the foundation for enterprise-scale decision velocity.

Platform Architecture · Systems of Record · Enterprise Scale

My Role: Led product strategy and execution to build a canonical system of record, standardize risk prioritization, and enable scalable decision-making.

The Environment

As enterprise security complexity increased—driven by expanding attack surfaces, fragmented tooling, and rising vulnerability volume—decision-making slowed.

Fragmented asset visibility

Increasing signal volume

Manual and inconsistent prioritization

II. Standardization Enables Scale

Risk normalization embedded into core workflows.

The Problem

The challenge wasn’t more data—it was fragmented systems and workflows.

Without a canonical system of record:

Risk signals were inconsistent and hard to compare

Workflows couldn’t scale across teams

Automation would amplify noise instead of improving outcomes

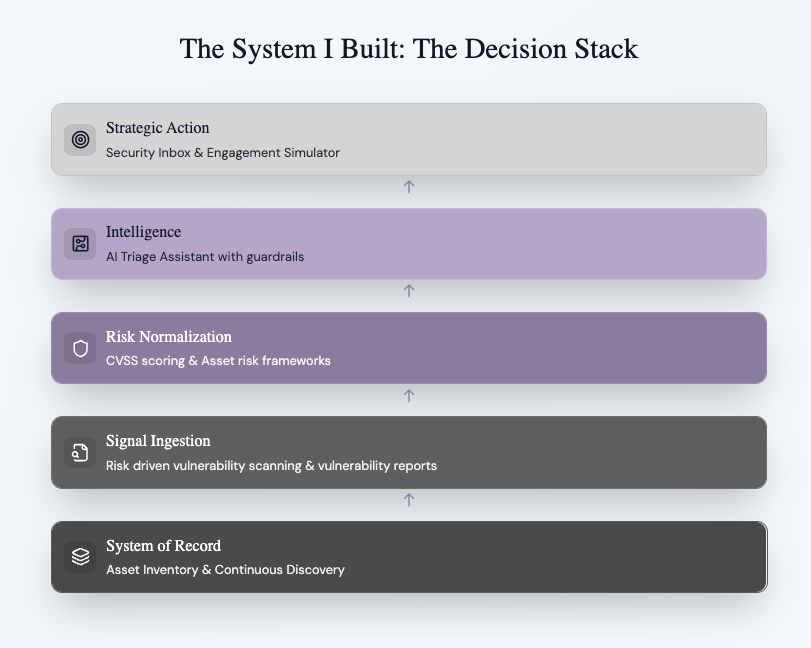

I. Foundation Before Acceleration

Canonical asset models before automation.

III. Workflow Before AI

Optimized for human cognition before acceleration.

IV. Automation as a Final Layer

AI introduced only once governance and inputs were durable.

What I Built

Built a canonical system of record for asset and vulnerability data

Standardized risk normalization (CVSS → VRT mapping)

Scaled asset management and program assignment workflows

Established a clean data foundation for AI and automation

The Architecture

Built in a live system — not a greenfield rebuild.

Data models > feature velocity

Normalize risk before automation

Governance before AI

Long-term scale > short-term speed

IMPACT

Enabled continuous visibility across 30K+ enterprise assets

Reduced manual prioritization through automated risk normalization

Scaled program workflows and asset assignment across enterprise customers

The Tradeoffs

CASE STUDY 2

AUTOMATIONS

Designing Trustworthy AI

From Signal to Trusted Remediation Velocity

Designing human-in-the-loop AI to improve triage speed without compromising trust.

AI Governance · Risk Architecture · Privacy Controls · Executive Impact

The Challenge

AI can accelerate prioritization—but without governance, it increases risk.

High vulnerability volume

Manual triage bottlenecks

Inconsistent severity decisions

High sensitivity to enterprise risk

We needed trusted automation—not faster noise.

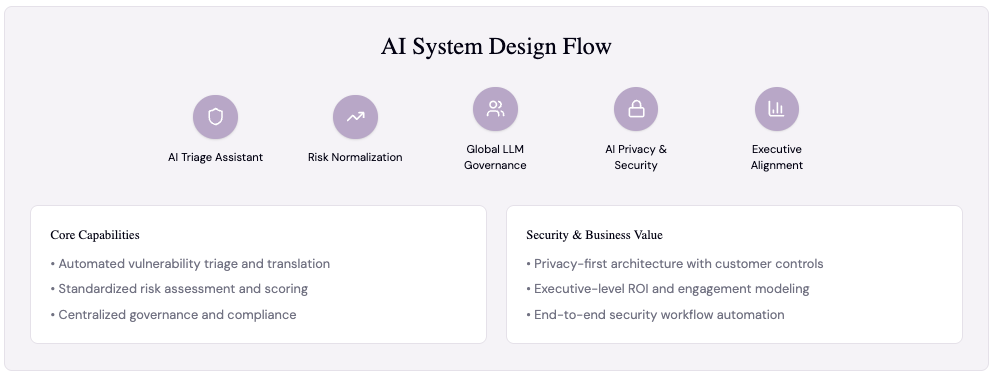

AI Architecture

I. Standardize Before Acceleration

Embedded CVSS → VRT mapping before introducing AI

II. Centralized LLM Governance

Centralized controls and governance guardrails

Tradeoffs We Made

> Scoped AI to assistive triage (not full automation)

> Embedded privacy and governance from day one

> Sequenced rollout after control frameworks were validated

What I Built

> Led productization of AI Triage Assistant from early capability to enterprise-ready product

> Embedded AI into triage workflows for contextual, in-platform decision-making

> Established governance, privacy, and LLM control frameworks for safe enterprise adoption

> Owned GTM rollout and drove adoption across enterprise customers

> Delivered continuous improvements through post-launch iteration

AI was introduced only after establishing structured data, governance, and workflow integrity.

III. Privacy by Default

Privacy-first architecture built into the core platform

IV. Executive-Linked Outcomes

Modeled ROI to secure executive alignment and sustained investment

Enterprise Impact

Delivered measurable operational leverage at enterprise scale:

> 70% manual triage workload reduction

> 200+ AI-augmented organizations

> 20% → 27% week-over-week adoption increase during phased rollout

Published Materials & Thought Leadership

> Turn vulnerability data into remediation velocity: Introducing Bugcrowd AI Triage Assistant

CASE STUDY 3

MARKETPLACES

Scaling a Marketplace Through Governance Systems

Scaling a multi-sided security marketplace through structured systems—not feature sprawl.

Marketplace Strategy · Roadmap Governance · Operational Scale

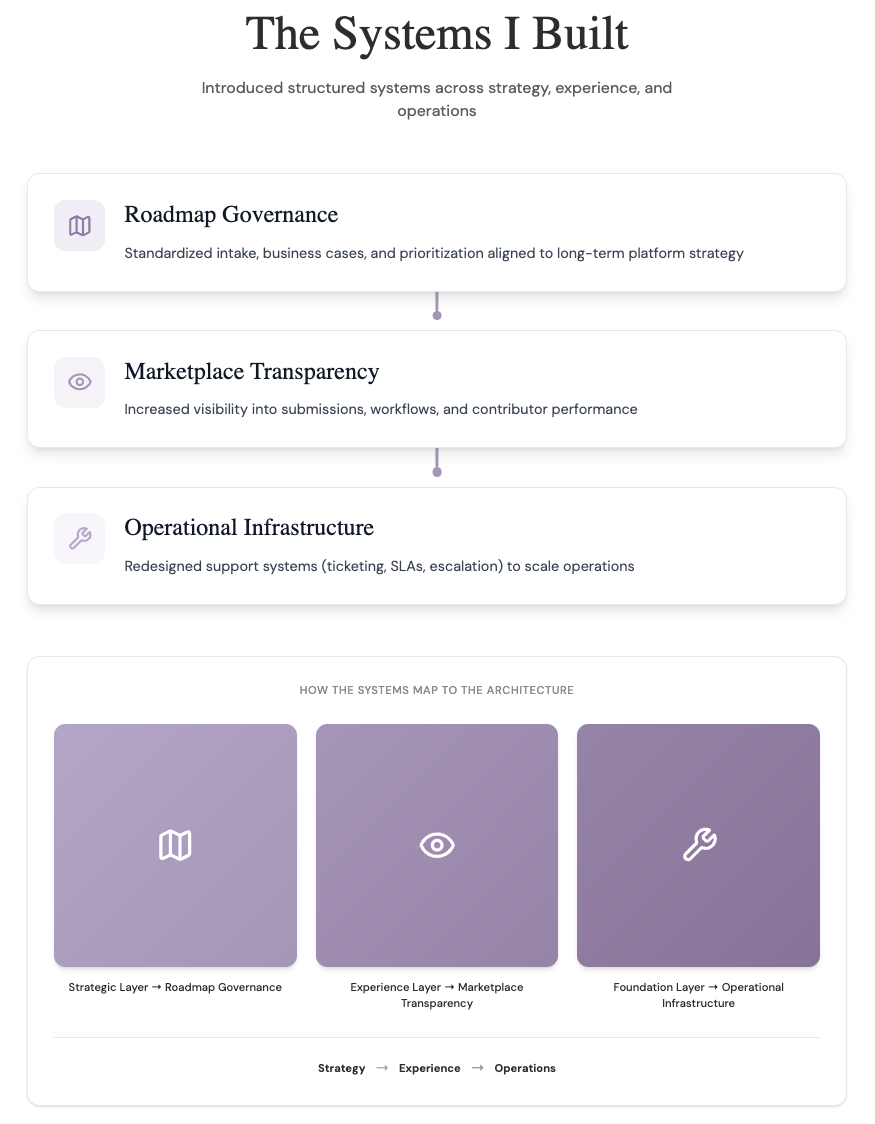

What I Built

Built governance and operational systems to scale a two-sided marketplace of 30K+ researchers and enterprise customers

Designed roadmap governance (intake, business cases, prioritization) to align investments to platform strategy

Launched incentive programs (P1 Warriors, Bounty Slayers, MVP) to drive participation and signal quality

Led global rollout of Support & Customer Success platform (Freshdesk Omni), redesigning routing, SLAs, and escalation workflows

Improved platform usability to reduce friction in program and engagement management

The Problem

As the marketplace scaled, complexity increased across both sides:

Competing enterprise, researcher, and internal priorities

Limited visibility into marketplace performance and workflows

Strained support, escalation, and triage systems

Inconsistent customer and researcher experience

Roadmap capacity constraints

Design Principles

Standardized workflows before scaling

Prioritized platform integrity over customization

Balanced enterprise needs with marketplace equity

Invested in internal systems to reduce downstream friction

Used data to drive prioritization and operational decisions

27% YoY increase in submission volume

Launched incentive systems to drive participation

To scale the marketplace, I introduced structured systems across roadmap, experience, and operations.

Impact

These systems transformed the marketplace from reactive operations into a scalable, data-driven platform.

25% annual growth in qualified researcher pool

Expanded and retained high-quality contributors

Reduced backlog from 10,000+ → 600 tickets

Accelerated triage and improved marketplace liquidity

Improved customer experience (NPS 50 → 58)

Delivered through workflow, UI, and operational improvements